Have a look at the following few images:

What if I told you that these were all generated just by providing some text? Would you believe it?

Oh, you do believe it. But do you know what research went into this kind of technology, and how it recently exploded on the internet, leading to the wave of generative AI?

Today we will summarize, in simple words, what the paper "Ho, J., Jain, A. and Abbeel, P., 2020. Denoising diffusion probabilistic models. Advances in Neural Information Processing Systems, 33, pp.6840-6851." is about, and how text-to-image generation is turning imagination into reality, pushing us closer to a more creative computational world.

What is a diffusion model?

In physics, a diffusion model describes how a substance or quantity spreads out over time and space, much as gas molecules move from areas of high density to areas of low density. In machine learning, diffusion models are generative models that learn the probability distribution of a dataset in two stages. First they watch what happens when noise is gradually diffused into the data. Then they learn to reverse that process. Each noising step destroys a little more of the original information, much as entropy rises in a thermodynamic system until none of the initial structure remains.

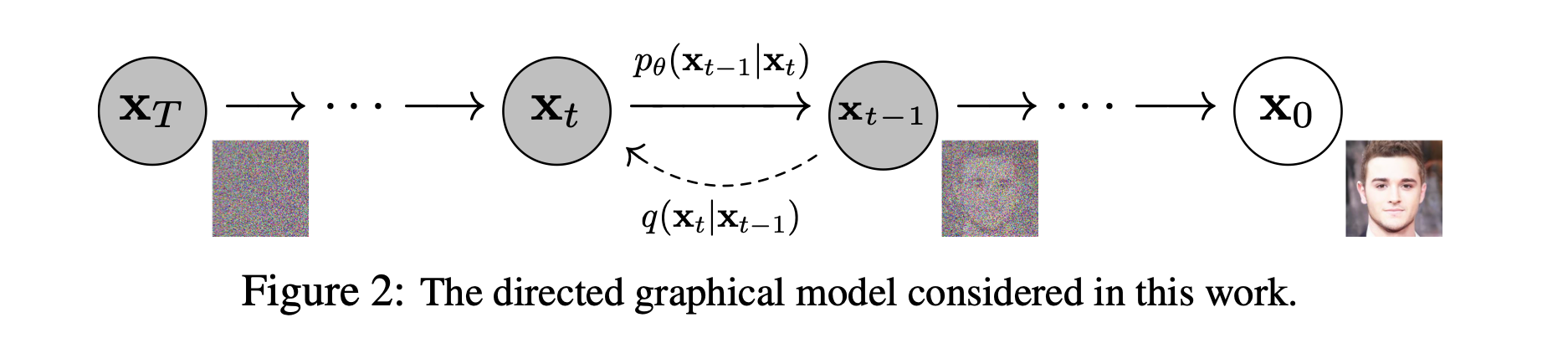

Diffusion models use two Markov chains. The forward chain adds a little noise to the data at each step. A second, learned chain runs in the opposite direction, and at each step it applies a transformation that removes a little of the noise. In DDPM, this reverse transformation is trained by optimizing a variational bound on the log-likelihood of the data. The authors simplify that bound to a mean-squared error between the noise that was actually added and the noise the network predicts.
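For reference, this is how Ho et al. write one step of each chain. Here x_0 is a data sample and x_1 to x_T are progressively noisier versions of it. β_t is the small, fixed noise variance used at step t, and θ denotes the learned network parameters. 𝒩(x; μ, Σ) is a Gaussian density over x with mean μ and covariance Σ.

```latex
\begin{aligned}
q(\mathbf{x}_t \mid \mathbf{x}_{t-1}) &= \mathcal{N}\!\left(\mathbf{x}_t;\ \sqrt{1-\beta_t}\,\mathbf{x}_{t-1},\ \beta_t \mathbf{I}\right) && \text{forward: add noise} \\
p_\theta(\mathbf{x}_{t-1} \mid \mathbf{x}_t) &= \mathcal{N}\!\left(\mathbf{x}_{t-1};\ \boldsymbol{\mu}_\theta(\mathbf{x}_t, t),\ \boldsymbol{\Sigma}_\theta(\mathbf{x}_t, t)\right) && \text{reverse: remove noise}
\end{aligned}
```

Before noise of variance β_t is added, x_{t-1} is scaled by √(1 − β_t). This keeps the overall variance from growing: if x_{t-1} has unit variance, so does x_t. The forward chain therefore settles on a standard Gaussian.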

What is a Markov Chain Process?

A Markov chain process is a mathematical model that describes a sequence of events or states, where the probability of each event or state depends only on the previous state. In other words, the future state of the process depends only on the current state and not on any previous states.
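In symbols, the Markov property says that conditioning on the whole history gives the same answer as conditioning on the current state alone. For a diffusion model, this lets both chains factorize into one-step transitions. Here q is the fixed noising chain over a data sample x_0 and its noisy versions x_1 to x_T, and p_θ is the learned denoising chain. The denoising chain starts from pure Gaussian noise p(x_T) = 𝒩(0, I):

```latex
\begin{aligned}
P(X_{t+1} \mid X_t, X_{t-1}, \dots, X_0) &= P(X_{t+1} \mid X_t) \\
q(\mathbf{x}_{1:T} \mid \mathbf{x}_0) &= \prod_{t=1}^{T} q(\mathbf{x}_t \mid \mathbf{x}_{t-1}) \\
p_\theta(\mathbf{x}_{0:T}) &= p(\mathbf{x}_T) \prod_{t=1}^{T} p_\theta(\mathbf{x}_{t-1} \mid \mathbf{x}_t), \qquad p(\mathbf{x}_T) = \mathcal{N}(\mathbf{x}_T;\ \mathbf{0}, \mathbf{I})
\end{aligned}
```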

The key idea behind diffusion models is that if we know exactly how data decays under noise, we can train a model to reverse that decay. We first simulate the diffusion of noise through the data with a Markov chain. We then train a model to "decode" the data by removing the noise step by step. Starting from pure noise and running all of those learned steps in sequence produces a new sample that looks like it came from the training data.

A denoising diffusion model works in two steps:

- the forward diffusion process; and

- the reverse process or the reconstruction

In the forward diffusion process, a small amount of Gaussian noise is added to the data at each of T steps (T = 1000 in the paper). The amounts follow a fixed schedule of noise variances β_1 to β_T, and by the last step the data is indistinguishable from pure noise. This simulates how noise gradually builds up in data over time. The forward process has no learnable parameters: unlike the encoder of a VAE, it does not need to be trained. Its job is to produce noisy versions of the training data, from which the reverse process learns how to remove the noise.
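A minimal sketch of the forward process follows. It uses the paper's linear schedule, with β rising from 10⁻⁴ to 0.02 over T = 1000 steps, and a toy "image" stored as a plain Python list of floats. Real implementations work on tensors, and the function names here are our own. Besides the step-by-step Markov update, the sketch uses a closed form that follows from chaining Gaussian steps. Let ᾱ_t be the product of (1 − β_s) for s = 1 to t. Then x_t = √ᾱ_t · x_0 + √(1 − ᾱ_t) · ε with ε ~ 𝒩(0, I), so a training example at any step can be produced in one jump.

```python
import math
import random

def linear_beta_schedule(T=1000, beta_start=1e-4, beta_end=0.02):
    """Noise variances beta_1..beta_T, linearly spaced (stored 0-based)."""
    return [beta_start + (beta_end - beta_start) * i / (T - 1) for i in range(T)]

def cumulative_alpha_bars(betas):
    """abar[t] = product of (1 - beta_s) over all steps up to and including t."""
    abar, prod = [], 1.0
    for beta in betas:
        prod *= 1.0 - beta
        abar.append(prod)
    return abar

def q_step(x_prev, beta, rng):
    """One forward Markov step: x_t ~ N(sqrt(1 - beta) * x_{t-1}, beta * I)."""
    return [math.sqrt(1.0 - beta) * v + math.sqrt(beta) * rng.gauss(0.0, 1.0)
            for v in x_prev]

def q_sample(x0, t, abar, rng):
    """Jump straight from x_0 to step t (0-based) using the closed form.
    Returns the noisy sample and the noise eps that was used."""
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    a = abar[t]
    xt = [math.sqrt(a) * v + math.sqrt(1.0 - a) * e for v, e in zip(x0, eps)]
    return xt, eps

# Usage: noise a toy three-pixel "image" all the way to the last step
rng = random.Random(0)
betas = linear_beta_schedule()
abar = cumulative_alpha_bars(betas)
x0 = [1.0, -0.5, 0.25]
x_noisy, eps = q_sample(x0, 999, abar, rng)
print(f"fraction of signal kept at the last step: {math.sqrt(abar[-1]):.4f}")
```

With this schedule, ᾱ_T ends up around 4 × 10⁻⁵. The last step therefore keeps well under 1% of the original signal (√ᾱ_T ≈ 0.006), which is why x_T can be treated as pure noise.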

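The reverse (reconstruction) process undoes the noise one step at a time. A neural network learns the conditional probability density of the slightly cleaner x_{t-1} given the noisier x_t. In DDPM, the network is not asked to output the clean data directly. Instead, given x_t and the step number t, it predicts the noise ε that was added, and the next, less noisy estimate is computed from that prediction. This step is crucial: once trained, the reverse process can start from pure random noise and turn it into a new sample that looks like the training data.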
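One reverse step can be sketched on plain Python lists. Let ᾱ_t be the running product of (1 − β_s) up to step t. The sketch assumes, as in DDPM, that the network predicts the added noise ε and that the variance is fixed to σ_t² = β_t. One step then computes the mean (x_t − β_t / √(1 − ᾱ_t) · ε_θ) / √(1 − β_t) and adds fresh noise, except on the very last step. The `predict_noise` callable and all other names are hypothetical stand-ins for the trained U-Net. The paper's linear schedule (β from 10⁻⁴ to 0.02, T = 1000) is assumed.

```python
import math
import random

def make_schedule(T=1000, beta_start=1e-4, beta_end=0.02):
    """Linear beta schedule and its cumulative products abar (0-based lists)."""
    betas = [beta_start + (beta_end - beta_start) * i / (T - 1) for i in range(T)]
    abar, prod = [], 1.0
    for beta in betas:
        prod *= 1.0 - beta
        abar.append(prod)
    return betas, abar

def p_step(xt, t, eps_pred, betas, abar, rng):
    """One reverse step x_t -> x_{t-1}, with t a 0-based step index.
    Mean:  (x_t - beta_t / sqrt(1 - abar_t) * eps_pred) / sqrt(1 - beta_t)
    Noise: sigma_t = sqrt(beta_t), skipped on the final step."""
    beta, a = betas[t], abar[t]
    coef = beta / math.sqrt(1.0 - a)
    mean = [(x - coef * e) / math.sqrt(1.0 - beta) for x, e in zip(xt, eps_pred)]
    if t == 0:
        return mean
    sigma = math.sqrt(beta)
    return [m + sigma * rng.gauss(0.0, 1.0) for m in mean]

def sample(predict_noise, dim, betas, abar, rng):
    """Start from pure Gaussian noise and apply every reverse step in turn.
    predict_noise(x_t, t) is a hypothetical stand-in for the trained network."""
    x = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    for t in reversed(range(len(betas))):
        x = p_step(x, t, predict_noise(x, t), betas, abar, rng)
    return x

# Usage with an untrained "network" that always predicts zero noise
calls = []
def dummy_noise_predictor(x, t):
    calls.append(t)            # record each network evaluation
    return [0.0] * len(x)

betas, abar = make_schedule()
img = sample(dummy_noise_predictor, 4, betas, abar, random.Random(0))
print(len(calls))  # 1000
```

Generating one sample calls the network once per step, 1000 times in sequence. A useful sanity check on the formula is to feed in the true noise instead of a prediction at the last step: the reverse step then recovers x_0 exactly.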

The network used in DDPM is a U-Net, an encoder-decoder convolutional network with skip connections. It is similar to an unmasked PixelCNN++ backbone, with group normalization and self-attention. It takes the noisy image together with the step number t as input and outputs a tensor with the same shape as the image.
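The paper tells the network which step it is on by adding the Transformer's sinusoidal position embedding of t into each residual block. Below is a minimal sketch of one common variant of that embedding. The frequency spacing and the `dim` and `max_period` defaults are our assumptions. A real model would also pass the result through learned layers before adding it to the feature maps.

```python
import math

def timestep_embedding(t, dim=8, max_period=10000.0):
    """Transformer-style sinusoidal embedding of the integer step t.
    Returns dim values: sin(t * f_k) for each frequency, then cos(t * f_k),
    with frequencies f_k spaced geometrically from 1 down towards 1 / max_period."""
    if dim % 2 != 0:
        raise ValueError("dim must be even")
    half = dim // 2
    freqs = [math.exp(-math.log(max_period) * k / half) for k in range(half)]
    return [math.sin(t * f) for f in freqs] + [math.cos(t * f) for f in freqs]

print(timestep_embedding(0, dim=4))  # [0.0, 0.0, 1.0, 1.0]
```

Each step gets a distinct, smoothly varying code. This lets a single set of network weights behave differently when x_t is almost clean and when it is almost pure noise.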

In the general formulation, the reverse step is a Gaussian, so the network could output both its mean and its variance. Ho et al. simplify this. The variance is fixed to a value set by the noise schedule (σ_t² = β_t), and the network outputs only the predicted noise, from which the mean is computed. This prediction is what removes the noise from the data step by step.
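The paper's simplified training objective can be sketched in a few lines. We pick a random step t and noise a clean example x_0 in one jump with the closed form x_t = √ᾱ_t · x_0 + √(1 − ᾱ_t) · ε, where ᾱ_t is the running product of (1 − β_s). We then score the network by the squared error between the true noise ε and its prediction. The list-based data and the `predict_noise` callable are hypothetical stand-ins for image tensors and the U-Net. In practice the loss would be averaged over a mini-batch and minimized with gradient descent.

```python
import math
import random

def make_alpha_bars(T=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative products abar_t of (1 - beta_s) for a linear beta schedule."""
    abar, prod = [], 1.0
    for i in range(T):
        beta = beta_start + (beta_end - beta_start) * i / (T - 1)
        prod *= 1.0 - beta
        abar.append(prod)
    return abar

def simple_loss(x0, predict_noise, abar, rng):
    """One Monte Carlo sample of the simplified DDPM loss
    || eps - eps_theta(sqrt(abar_t) * x0 + sqrt(1 - abar_t) * eps, t) ||^2,
    averaged over the entries of x0."""
    t = rng.randrange(len(abar))              # random step, chosen uniformly
    eps = [rng.gauss(0.0, 1.0) for _ in x0]   # the noise the network must recover
    a = abar[t]
    xt = [math.sqrt(a) * v + math.sqrt(1.0 - a) * e for v, e in zip(x0, eps)]
    pred = predict_noise(xt, t)               # hypothetical network call
    return sum((e - p) ** 2 for e, p in zip(eps, pred)) / len(x0)

# Usage: an untrained "network" that always predicts zero noise
abar = make_alpha_bars()
x0 = [0.5, -0.25, 1.0, 0.0]
zero_net = lambda xt, t: [0.0] * len(xt)
print(simple_loss(x0, zero_net, abar, random.Random(0)))
```

A network that predicts zero noise scores about 1 on average, the variance of ε. A perfect predictor scores 0. Training pushes the U-Net from the first toward the second.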

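Diffusion models are expensive to sample from. Generating a single image with DDPM takes T = 1000 sequential passes through the network, whereas a GAN generator or a VAE decoder needs only one. In return, they often produce better results in applications such as image synthesis, including text-to-image generation.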

For example, the DDPM paper reports an Inception score of 9.46 and an FID of 3.17 on unconditional CIFAR-10, a state-of-the-art FID at the time. Later text-to-image systems built on diffusion have produced strikingly realistic images. One intuition for this quality is that the model never has to generate an image in one shot. Each step only has to remove a little noise, which is a much easier prediction problem, and many such small corrections add up to a detailed, high-quality result.

The potential applications of this technology are vast and awe-inspiring. From creating lifelike virtual environments for gaming and movies to generating images for online shopping sites, DDPMs are transforming the way we think about image generation and synthesis.

As the world of DDPMs and machine learning continues to evolve, we can expect to see even more impressive applications in the future. So if you've been blown away by high-quality images online lately, now you know the powerful technology behind them.

Explain like I am five:

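This paper is about a special computer program that can make really cool pictures. It learns by taking a picture and adding some "dirt" or "noise" to it a little at a time, like spots on a shirt. Then it practices using math to take the dirt away and make the picture clean again. Once it is really good at cleaning, it can start from a picture that is nothing but dirt and clean it into a brand-new picture!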

When programs like this are also taught to understand words, people can use them to make pictures of things that don't really exist, like a chair that looks like an avocado! They just describe the chair with words, and the program makes a picture of it.

The program is really good at making pictures that look like real photos. It can be used for lots of things, like making video games and movies more realistic, or making pictures for shopping websites.


We research, curate and publish daily updates from the field of AI.
