What is Stable Diffusion?

Text-to-image machine learning models take a natural language description as input and produce an image that matches that description. Development of such models began in the mid-2010s, following advances in deep neural networks. By 2022, state-of-the-art text-to-image models such as OpenAI's DALL-E 2, Google Brain's Imagen, and Stability AI's Stable Diffusion began to approach the quality of real photographs and human-drawn artwork.

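Stable Diffusion is a text-to-image AI model that uses latent diffusion to generate images: instead of working on pixels directly, it runs the diffusion process on a compressed (latent) representation of the image. It was released and popularized by Stability AI.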
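For readers who want a feel for what "diffusion" means here, the following toy sketch shows only the noising half of the idea: a small list of numbers stands in for an encoded image, and Gaussian noise is mixed in according to a schedule. This is an illustration under assumptions, not Stable Diffusion's actual code. The function names, the four-number "latent", and the linear schedule values are all made up for the example.

```python
import math
import random


def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Return per-step noise variances rising linearly from beta_start to beta_end."""
    if num_steps == 1:
        return [beta_start]
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alphas(betas):
    """Return alpha_bar_t, the running product of (1 - beta_s) for all s <= t."""
    alpha_bars = []
    running = 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def add_noise(latent, t, alpha_bars, rng):
    """Sample x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * noise."""
    signal_scale = math.sqrt(alpha_bars[t])
    noise_scale = math.sqrt(1.0 - alpha_bars[t])
    return [signal_scale * x + noise_scale * rng.gauss(0.0, 1.0) for x in latent]


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so repeated runs match
    betas = linear_beta_schedule(1000)
    alpha_bars = cumulative_alphas(betas)
    latent = [1.0, -0.5, 0.25, 0.0]  # hypothetical stand-in for an encoded image
    for t in (0, 499, 999):
        noisy = add_noise(latent, t, alpha_bars, rng)
        print(t, round(alpha_bars[t], 4), [round(v, 3) for v in noisy])
```

At early steps the values stay close to the original; by the last step they are almost pure noise. A diffusion model is trained to reverse this process step by step, and in a text-to-image model that reversal is guided by the text prompt.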

When was it announced and when was it released?

It was announced on 10 August 2022 and publicly released on 22 August 2022.

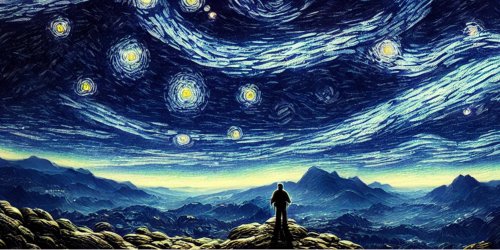

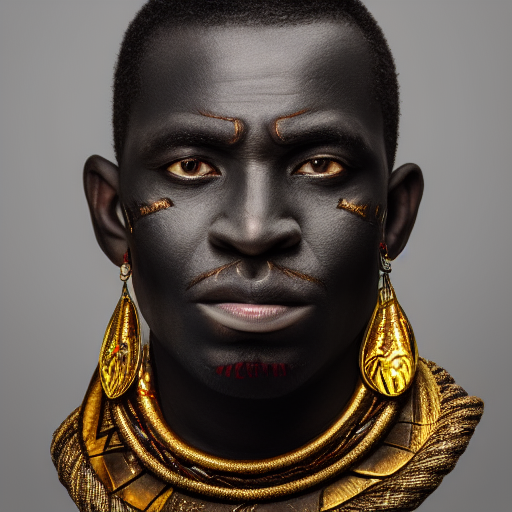

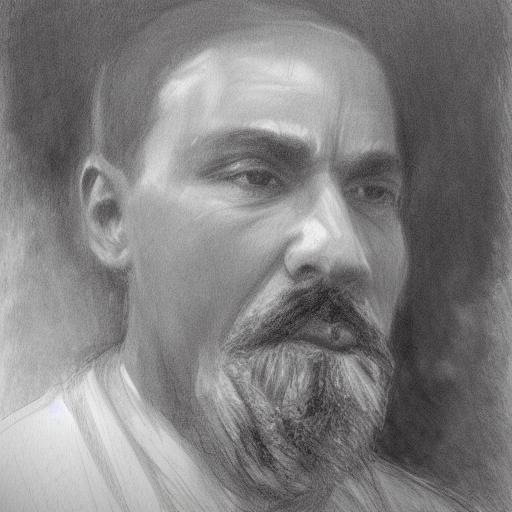

What kind of images does it generate?

We have shared various images generated by Stable Diffusion in previous posts. The gallery in this post shows a few more examples of what the model can generate.

How can I run it on my own computer?

If you own a Mac with an M1 or M2 chip, you can use the following Git repo to get your own copy of the software and be as creative as you like.

CHARL-E packages Stable Diffusion into a simple app. No complex setup, dependencies, or internet required — just download and say what you want to see.

You can also download the package from the website by Charlie Holtz.

Try various prompts and see how creative you can get. You can learn how to write a prompt from my previous post here:
