The competition

In the calm and quiet of Dr. Silverman’s lab, a place alive with the gentle hum of machines and the soft rhythm of keyboards, Rohit entered with an air of excitement that immediately caught the attention of his mentor. Dr. Silverman, known for his deep explorations into AI research, looked up from his work, sensing the eagerness in Rohit's approach.

"Dr. Silverman," Rohit began, his eyes bright with enthusiasm, "I wanted to tell you about the annual innovation event that has all the neighboring villages talking. It’s a big occasion here, and the whole village is abuzz with anticipation."

Dr. Silverman set aside his notes, intrigued. "Tell me more about this event, Rohit."

"It's a competition where each village presents an innovative project that could benefit the wider community," Rohit explained. "The winning village receives a grant to implement their solution across all participating villages. It’s not just about winning; it’s about making a real impact."

"Our village hasn’t clinched victory in this competition for several years," Rohit continued, a hint of resolve in his voice. "But this year, everyone is committed to their projects, aiming to bring the title home. There’s a renewed sense of unity and purpose that’s genuinely inspiring."

Dr. Silverman nodded, visibly impressed. "What’s your plan for the competition, Rohit?"

"I'm working on leveraging a Large Language Model with our local data to devise a solution that could substantially help our community," he said. "However, I'm exploring various LoRA techniques for fine-tuning the model, and I could really use your guidance on this."

Dr. Silverman’s expression turned thoughtful, filled with a mix of pride and curiosity. "That’s a commendable project, Rohit. Let’s dive into the world of LoRA techniques together and find the best approach to optimize your project for the competition."

As Rohit settled into his seat, his notebook ready for a flurry of notes, he knew he was about to embark on a learning journey filled with valuable insights. Dr. Silverman, with his extensive knowledge, was more than a mentor; he was a beacon pointing the way to possibilities that could bring their village success.

Low Rank Adaptation

Dr. Silverman's lab was a haven of technological innovation, a place where ideas took flight. As Rohit laid out his project plans across the lab table, Dr. Silverman leaned in, intrigued by the young student’s ambition.

"So, you’re planning to use a Large Language Model (LLM) for your project. That's a bold move, Rohit," Dr. Silverman commented.

Rohit nodded, "Yes, Dr. Silverman. But I’m exploring LoRA techniques for fine-tuning the model, and I need your help to understand them better."

Dr. Silverman, always eager to impart knowledge, began, "Let’s start with the basics. LoRA, or Low Rank Adaptation, is like fine-tuning an intricate clock. It leaves the pretrained weights fixed and models the weight update with a low-rank decomposition, a pair of small trainable matrices, so the existing structure is modified without being overhauled completely."

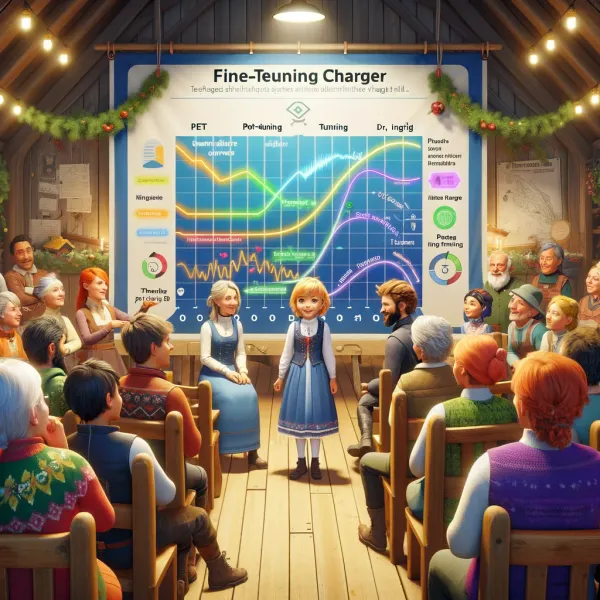
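
For readers who like to see the idea in code, here is a minimal sketch of the low-rank update Dr. Silverman describes, written in plain Python. The layer sizes, the rank of 4, the scaling factor `alpha`, and the helper names (`matmul`, `lora_forward`, and so on) are all assumptions chosen for illustration; they are not taken from Rohit's project or from any particular library.

```python
import random

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def random_matrix(rows, cols, rng, std=0.02):
    """Matrix of small random numbers."""
    return [[rng.gauss(0.0, std) for _ in range(cols)] for _ in range(rows)]

def zeros(rows, cols):
    return [[0.0] * cols for _ in range(rows)]

# Hypothetical sizes: a 64x64 layer adapted with rank 4.
d_in, d_out, rank, alpha = 64, 64, 4, 8
rng = random.Random(0)

W = random_matrix(d_in, d_out, rng)  # pretrained weights, kept frozen
A = random_matrix(d_in, rank, rng)   # small trainable matrix
B = zeros(rank, d_out)               # small trainable matrix, starts at zero

def lora_forward(x, W, A, B, alpha, rank):
    """Frozen layer output plus the scaled low-rank update x @ A @ B."""
    base = matmul(x, W)
    update = matmul(matmul(x, A), B)
    factor = alpha / rank
    return [[b + factor * u for b, u in zip(b_row, u_row)]
            for b_row, u_row in zip(base, update)]

x = random_matrix(2, d_in, rng, std=1.0)  # two made-up input rows
y = lora_forward(x, W, A, B, alpha, rank)

full_params = d_in * d_out
lora_params = d_in * rank + rank * d_out
print("numbers in the frozen layer:", full_params)
print("numbers trained by the adapter:", lora_params)
```

With these made-up sizes, the two small matrices hold 512 numbers against 4,096 in the frozen layer. Because `B` starts at zero, the adapted layer initially behaves exactly like the pretrained one, and training only ever changes `A` and `B`.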

"Then there’s QLoRA," Rohit chimed in, "which focuses on model quantization to reduce memory usage, right?"

"Exactly," Dr. Silverman replied. "QLoRA uses 4-bit quantization on pretrained model weights. Imagine compressing a book into a summary without losing its essence. It saves memory while maintaining performance."

"What about QA-LoRA and LoftQ?" Rohit asked, pen poised over his notepad.

"QA-LoRA builds on that idea. It combines parameter-efficient fine-tuning with group-wise quantization, applied during both training and inference," Dr. Silverman explained. "LoftQ takes a similar approach, applying quantization and LoRA fine-tuning at the same time."

Rohit’s interest was piqued. "And LongLoRA?"

"It’s designed to adapt LLMs to longer context lengths, using sparse local attention. Think of it as extending the range of a telescope to see further, yet more clearly," Dr. Silverman illustrated.

"And S-LoRA?" Rohit inquired further.

"S-LoRA efficiently manages multiple LoRA modules on a single GPU. It’s akin to running multiple complex applications on your computer simultaneously without any hiccups," the professor elucidated.

As they delved deeper, Dr. Silverman explained other variants like LQ-LoRA, MultiLoRA, LoRA-FA, Tied-LoRA, and GLoRA. Each had its unique application, like different tools in a toolkit, each tailored for specific tasks.

With each explanation, Rohit’s understanding deepened. The complexities of LoRA techniques unfolded like a map, guiding him through the labyrinth of AI fine-tuning.

"I feel much more confident about choosing the right LoRA technique now," Rohit said, a sense of clarity in his eyes.

As the meeting concluded, Dr. Silverman felt a sense of accomplishment. Not only had he guided Rohit through the intricacies of LoRA, but he had also ignited a spark of innovation in his student.

"Remember, Rohit," Dr. Silverman said as Rohit gathered his notes, "innovation is about finding the right tools and knowing how to use them effectively. Your project has great potential."

Rohit left the lab, his mind buzzing with ideas and possibilities, ready to tackle his project with newfound knowledge and insight.

- Low Rank Decomposition: Imagine you have a huge, complex puzzle. Low rank decomposition is like finding a simpler way to understand and solve this puzzle without using all the pieces. In AI, it means approximating a large grid of numbers, such as a model's weights, with the product of much smaller grids, so the model still works effectively but is easier to manage.

- Quantization: In AI, quantization is like taking a detailed, high-quality photograph and reducing its size so it takes up less space on your computer, but you can still recognize the picture clearly. It involves storing a model's numbers (like its weights) with fewer bits of precision so that the model uses less memory and runs faster.

- Pretrained Model: Think of a pretrained model like a well-trained pet that can already do a lot of tricks. It's an AI model that someone else has already trained on a large amount of data, so it knows how to do a lot of tasks. You can further train this model on your specific task, like teaching your pet a new trick.

- Fine-Tuning: This is similar to giving a car a tune-up. Fine-tuning an AI model means making small, precise adjustments to improve its performance on a specific task, much like tuning a car to perform better or more efficiently.

- Parameter-Efficient Finetuning: Imagine you want to customize a car but can only change a few parts. Parameter-efficient finetuning is about making significant improvements to an AI model by only tweaking a small part of it, making the process quicker and less resource-heavy.

- Group-Wise Quantization: It's like organizing a large group of people into smaller teams so it’s easier to manage them. In AI, group-wise quantization divides the model's information into groups and then simplifies each group separately, making the whole model more efficient.

- GPU (Graphics Processing Unit): This is like the engine in a gaming console that makes the games run smoothly with high-quality graphics. In AI, a GPU is a powerful processor that helps run complex AI models quickly and efficiently.

- Tensor Parallelism: Imagine a group of people working together to quickly solve a large puzzle by dividing it into smaller sections. Tensor parallelism is a way to make AI models run faster by splitting their large tensors (the grids of numbers that make up the model) across several processors, such as GPUs, and working on the pieces simultaneously.
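
The quantization and group-wise quantization entries above can be made concrete with a small sketch. It assumes a plain min-max uniform 4-bit scheme and made-up weights, purely for illustration; the actual schemes used by methods such as QLoRA and QA-LoRA differ in their details, and the function names here are hypothetical.

```python
import random

def quantize_group(values, bits=4):
    """Map one group of numbers to integer codes using `bits` bits each."""
    levels = 2 ** bits - 1
    lo, hi = min(values), max(values)
    step = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((v - lo) / step) for v in values]
    return codes, lo, step

def dequantize_group(codes, lo, step):
    """Turn the integer codes back into approximate numbers."""
    return [lo + c * step for c in codes]

def groupwise_roundtrip(weights, group_size, bits=4):
    """Quantize and restore the weights one group at a time."""
    restored = []
    for start in range(0, len(weights), group_size):
        group = weights[start:start + group_size]
        codes, lo, step = quantize_group(group, bits)
        restored.extend(dequantize_group(codes, lo, step))
    return restored

def max_error(a, b):
    return max(abs(x - y) for x, y in zip(a, b))

rng = random.Random(0)
# Made-up weights: 48 small values followed by 16 much larger ones.
weights = ([rng.uniform(-0.1, 0.1) for _ in range(48)]
           + [rng.uniform(-4.0, 4.0) for _ in range(16)])

whole = groupwise_roundtrip(weights, group_size=len(weights))  # one big group
grouped = groupwise_roundtrip(weights, group_size=16)          # four groups

print("error on small weights, one group:  ", max_error(whole[:48], weights[:48]))
print("error on small weights, four groups:", max_error(grouped[:48], weights[:48]))
```

Because each group gets its own range, the small weights are no longer forced onto the coarse grid that the few large weights require, so their rounding error shrinks.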

| Variant | Description |
|---|---|
| LoRA | Models the weight update during finetuning with a low-rank decomposition. Leaves pretrained layers fixed and injects trainable rank decomposition matrices. |
| QLoRA | Applies model quantization techniques to reduce memory usage, using 4-bit quantization on pretrained model weights. |
| QA-LoRA | Combines parameter-efficient finetuning with quantization (group-wise quantization during training and inference). |
| LoftQ | Similar to QA-LoRA; applies quantization and LoRA finetuning simultaneously. |
| LongLoRA | Adapts LLMs to longer context lengths using a parameter-efficient (LoRA-based) finetuning scheme with sparse local attention. |
| S-LoRA | Serves thousands of LoRA modules on a single GPU, managing memory and batching LoRA computations efficiently. |
| LQ-LoRA | Applies a more sophisticated quantization scheme within the QLoRA approach, adaptable to a target memory budget. |
| MultiLoRA | Handles complex multi-task learning scenarios more effectively. |
| LoRA-FA | Freezes one of the two low-rank decomposition matrices to reduce memory overhead. |
| Tied-LoRA | Leverages weight tying to improve parameter efficiency. |
| GLoRA | Extends LoRA to adapt pretrained model weights and activations to each task, with an adapter for each layer. |
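
To make the S-LoRA row a little more tangible, the sketch below shows the basic idea of answering requests for several adapters on top of one shared set of base weights. It is a toy illustration in plain Python, not S-LoRA's actual implementation, which manages GPU memory and batching in far more sophisticated ways; the adapter names, sizes, and the `serve_batch` function are hypothetical.

```python
import random

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def random_matrix(rows, cols, rng, std=0.1):
    return [[rng.gauss(0.0, std) for _ in range(cols)] for _ in range(rows)]

rng = random.Random(0)
d, rank = 32, 2  # hypothetical layer width and adapter rank

W = random_matrix(d, d, rng)  # base weights, stored once and shared

# One small (A, B) pair per task; the task names are made up.
adapters = {
    name: (random_matrix(d, rank, rng), random_matrix(rank, d, rng))
    for name in ("crops", "weather", "health")
}

def serve_batch(requests, W, adapters):
    """Answer a batch of (adapter_name, input_row) requests."""
    inputs = [x for _, x in requests]
    base = matmul(inputs, W)  # one pass through the shared weights for the whole batch
    outputs = []
    for (name, x), base_row in zip(requests, base):
        A, B = adapters[name]
        delta = matmul(matmul([x], A), B)[0]  # this request's low-rank correction
        outputs.append([b + u for b, u in zip(base_row, delta)])
    return outputs

x = [rng.gauss(0.0, 1.0) for _ in range(d)]
requests = [("crops", x), ("weather", x), ("health", x)]
outputs = serve_batch(requests, W, adapters)

shared_numbers = d * d + len(adapters) * 2 * d * rank
separate_numbers = len(adapters) * d * d
print("numbers stored with a shared base:", shared_numbers)
print("numbers stored with one full copy per task:", separate_numbers)
```

The same input produces a different answer for each adapter, yet the large base matrix is stored only once. In this toy setup that means 1,408 stored numbers instead of 3,072, and the saving grows as more adapters are added.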
